ChatGPT Enterprise has quickly become a buzzword in boardrooms and team meetings. Companies are rushing to empower their software developers, HR managers, and analysts with this AI assistant in hopes of boosting productivity and innovation.

But amidst the excitement, one pressing question looms: How do we actually measure the business impact of ChatGPT Enterprise?

Implementing cutting-edge AI is one thing; demonstrating its tangible value to the business is another. Measuring that impact is crucial to ensure that adopting ChatGPT Enterprise isn’t just a leap of faith but a data-driven success.

Investing in generative AI at an enterprise scale is a significant decision. Like any major investment, executives and stakeholders want to see a return. In fact, recent surveys reveal a gap between AI hype and reality. A study by BCG found 74% of companies have yet to demonstrate tangible value from their AI deployments. Even more concerning, 71% of organizations reported that AI has increased workload and decreased productivity in early implementations.

These findings underscore that simply deploying AI tools doesn’t guarantee positive outcomes. Without clear metrics and continuous monitoring, companies risk not getting the efficiency gains they hoped for – or worse, introducing new inefficiencies.

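Measuring impact matters for several reasons. It shows executives whether the investment is paying off. It reveals whether the tool is actually saving time or quietly adding work. It also points to where adoption is stalling and needs support.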

In short, you can’t manage what you don’t measure – and that adage holds true for enterprise AI initiatives. Let’s explore what exactly we should be measuring and how to do it.

What should you measure to know if ChatGPT Enterprise is truly delivering value? The answer will vary by organization, but several core categories of metrics have emerged as most useful. Below, we break down key metrics that map to the benefits and challenges discussed. These metrics will help quantify the impact in terms that resonate with both technical teams and business stakeholders:

How many people are actually using ChatGPT Enterprise? Active usage metrics include the number of employees who use it each week, often expressed as a share of licensed seats, and the number of AI sessions or prompts per user per week. Higher usage suggests that the tool is becoming embedded in daily workflows. Low or dropping usage could signal issues such as lack of awareness, poor UX, or users not finding good use cases.
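With event-level data, the arithmetic behind these adoption numbers is simple. The sketch below assumes a hypothetical export in which each prompt is a record containing a pseudonymous user ID and a date. The `PromptEvent` type and the `weekly_usage` function are illustrative names and are not part of any ChatGPT Enterprise API. For each week, the sketch computes the number of active users, the share of licensed seats they represent, and the prompts sent per active user.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class PromptEvent:
    user_id: str  # hypothetical field: a pseudonymous user identifier
    day: date     # hypothetical field: the day the prompt was sent

def weekly_usage(events, licensed_seats):
    """Summarize prompt events by ISO week.

    Returns {(iso_year, iso_week): (active_users, activation_rate, prompts_per_active_user)},
    where activation_rate is active users divided by licensed seats.
    """
    prompts = defaultdict(int)
    users = defaultdict(set)
    for event in events:
        iso_year, iso_week, _ = event.day.isocalendar()
        key = (iso_year, iso_week)
        prompts[key] += 1
        users[key].add(event.user_id)
    summary = {}
    for key in sorted(prompts):
        active = len(users[key])
        summary[key] = (active, active / licensed_seats, prompts[key] / active)
    return summary

# Hypothetical export: two users in one week, one user the next, four licensed seats
events = [
    PromptEvent("u1", date(2024, 3, 4)),
    PromptEvent("u1", date(2024, 3, 5)),
    PromptEvent("u2", date(2024, 3, 6)),
    PromptEvent("u1", date(2024, 3, 11)),
]
usage = weekly_usage(events, licensed_seats=4)
for week, (active, rate, per_user) in usage.items():
    print(week, f"{active} active users, {rate:.0%} of seats, {per_user:.1f} prompts/user")
```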

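These metrics measure the impact of ChatGPT Enterprise on the pace and volume of work. They usually require comparing baseline performance (before AI or without AI) with performance using AI. Examples include the time spent drafting documents, the average resolution time for support tickets, and the number of deliverables a team produces per month, such as blog posts.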

Remember that not all metrics will be equally important to every stakeholder. An executive might focus on ROI and high-level productivity statistics, while a people analytics leader will be interested in adoption patterns and employee engagement. The key is to select a balanced set of metrics that, together, paint a comprehensive picture of ChatGPT Enterprise’s impact from multiple angles.

Identifying metrics is half the battle – you also need a strategy to collect and analyze the data consistently. Here are some strategies and best practices to effectively measure the business impact of ChatGPT Enterprise:

1. Establish a Baseline

Before (or as) you roll out ChatGPT Enterprise, capture baseline data for the metrics of interest. For example, what is the average resolution time in support before AI, or how many blog posts can Marketing write per month pre-AI?

Baselines provide a comparison point so you can quantify improvement. If you’ve already launched without baselines, don’t worry – you can use historical data or even run a small control group without AI for a short period as a proxy.
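After you have a baseline, measuring improvement comes down to a relative-change calculation. The minimal sketch below assumes you have per-ticket resolution times in hours from before and after the rollout. The numbers are made up for illustration.

```python
from statistics import mean

def percent_change(baseline, current):
    """Relative change from baseline, in percent; negative means the value went down."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100

# Hypothetical support-ticket resolution times, in hours
baseline_hours = [6.0, 5.5, 7.0, 6.5]  # before rollout
current_hours = [5.0, 4.5, 5.5, 5.0]   # after rollout

change = percent_change(mean(baseline_hours), mean(current_hours))
print(f"Average resolution time changed by {change:.1f}%")
```

Watch the sign convention. For time-based metrics, a negative change is the improvement. For output metrics such as blog posts per month, a positive change is.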

2. Use Pilot Programs and A/B Testing

Rather than deploying to everyone on day one, some organizations roll out AI to a pilot group or specific departments first. This creates a natural experiment – compare the pilot group’s metrics to those of a control group that hasn’t yet adopted ChatGPT.

If the pilot group completes its work significantly faster or produces better output, that’s strong evidence of impact. This phased approach also helps iron out issues before company-wide deployment.
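A significance test can turn "significantly" into a concrete number. The sketch below uses a permutation test, which is one common choice that requires no normality assumption, to compare mean task times between a pilot group and a control group. The task-time data are hypothetical. The function name, resample count, and seed are illustrative choices.

```python
import random

def _mean(values):
    return sum(values) / len(values)

def permutation_test(pilot, control, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in mean task time.

    Returns (observed_difference, p_value). The observed difference is
    mean(pilot) - mean(control), so a negative value means the pilot group
    was faster.
    """
    rng = random.Random(seed)  # fixed seed makes the result reproducible
    observed = _mean(pilot) - _mean(control)
    pooled = list(pilot) + list(control)
    n_pilot = len(pilot)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # randomly reassign people to the two groups
        diff = _mean(pooled[:n_pilot]) - _mean(pooled[n_pilot:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # The +1 terms count the observed labeling itself and keep p above zero.
    p_value = (extreme + 1) / (n_resamples + 1)
    return observed, p_value

# Hypothetical minutes to complete a standard drafting task
pilot_minutes = [32, 28, 35, 30, 27, 31, 29, 33]
control_minutes = [41, 38, 45, 40, 36, 43, 39, 42]

diff, p = permutation_test(pilot_minutes, control_minutes)
print(f"Pilot minus control: {diff:.2f} minutes, p = {p:.4f}")
```

The test estimates how often a random relabeling of people as "pilot" or "control" would produce a gap at least as large as the observed one. A small p-value supports a causal reading only if the two groups were comparable to begin with, for example in roles and workloads.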

3. Leverage Analytics Tools and Telemetry

Many companies use analytics platforms to automate this data collection. Worklytics, for example, specializes in aggregating workplace tool usage (including AI tools) into meaningful metrics.

By connecting to ChatGPT’s enterprise API or admin console, it can pull usage stats and combine them with other work data. The idea is to automatically feed data into dashboards rather than relying purely on manual reporting or surveys. Whichever approach you use, ensure that it’s continuous – real-time or at least weekly data updates will let you spot trends and react quickly.
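Continuous data is most useful when something is checking it. The function below sketches one such check. It flags any team whose latest weekly prompt count fell more than 30% below its trailing four-week average. The input format (a dict mapping team name to weekly counts), the window, and the threshold are all assumptions you would tune for your organization. They are not settings from Worklytics or OpenAI.

```python
from statistics import mean

def flag_usage_drops(weekly_counts, window=4, drop_threshold=0.3):
    """Flag teams whose latest weekly usage fell well below their recent average.

    weekly_counts maps a team name to a chronological list of weekly prompt counts.
    A team is flagged when its latest week is more than drop_threshold (30% by
    default) below the mean of the preceding `window` weeks. The returned value
    is the percent change versus that trailing mean.
    """
    flagged = {}
    for team, counts in weekly_counts.items():
        if len(counts) < window + 1:
            continue  # not enough history to compare against
        trailing = mean(counts[-window - 1:-1])
        latest = counts[-1]
        if trailing > 0 and (trailing - latest) / trailing > drop_threshold:
            flagged[team] = round((latest - trailing) / trailing * 100, 1)
    return flagged

# Hypothetical weekly prompt counts pulled from a usage export
weekly = {
    "Engineering": [410, 430, 455, 470, 480],
    "Marketing": [220, 240, 230, 250, 120],
    "Finance": [60, 70],
}
drops = flag_usage_drops(weekly)
print(drops)
```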

4. Maintain Employee Privacy and Trust

As you collect data, be transparent about what you are measuring and why. Emphasize that measurement is about aggregate trends and business outcomes, not individual surveillance or evaluating someone’s performance. Use techniques like anonymization and aggregation (e.g. metrics by team, not by person) to protect privacy.

By designing your measurement approach with privacy in mind from the start (much like how Worklytics employs a privacy-first design), you both comply with regulations and gain employee buy-in. When people understand that the goal is to help them succeed with AI, they are more likely to participate honestly in surveys and embrace measurement as a positive thing.
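You can enforce aggregation and anonymization in code before any number reaches a dashboard. The minimal sketch below assumes usage records arrive as (user ID, team, prompt count) tuples. It replaces each user ID with a keyed HMAC-SHA256 hash and suppresses any team with fewer than five distinct users. The five-user threshold and the function names are illustrative assumptions. The right threshold is a decision for your privacy and legal teams.

```python
import hashlib
import hmac
from collections import defaultdict

MIN_GROUP_SIZE = 5  # assumed threshold; agree on the real value with privacy/legal

def pseudonymize(user_id, secret_key):
    """Replace a user identifier with a keyed hash so raw IDs never reach the report."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

def team_report(records, secret_key, min_group_size=MIN_GROUP_SIZE):
    """Aggregate (user_id, team, prompt_count) records into per-team totals.

    Teams with fewer distinct users than min_group_size are dropped, so no
    reported number can be traced back to an individual.
    """
    users = defaultdict(set)
    prompts = defaultdict(int)
    for user_id, team, prompt_count in records:
        users[team].add(pseudonymize(user_id, secret_key))
        prompts[team] += prompt_count
    return {
        team: {"active_users": len(users[team]), "prompts": prompts[team]}
        for team in users
        if len(users[team]) >= min_group_size
    }

# Hypothetical records: six people in Sales, two in Legal
records = [(f"sales-{i}", "Sales", 10) for i in range(6)]
records += [("legal-1", "Legal", 4), ("legal-2", "Legal", 7)]
report = team_report(records, secret_key=b"example-key-from-a-secrets-manager")
print(report)  # Legal is suppressed because it falls below the minimum group size
```

In a real deployment, the secret key would live in a secrets manager. That way, nobody without the key can recompute the hashes from a list of employee IDs.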

5. Tie Metrics to Business Outcomes

Whenever possible, connect the ChatGPT metrics to broader business KPIs. For instance, if you notice a 20% reduction in document drafting time in R&D, did product development timelines also speed up?

Making these connections will strengthen the narrative of AI’s value. It also helps you spot lags: an efficiency metric may improve right away, while the financial results take a quarter or two to appear.
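One way to look for these lags is to correlate an AI usage metric in one period with a business KPI one or more periods later. The sketch below computes a Pearson correlation at lags of zero to three periods. The monthly series are hypothetical and were deliberately constructed so that the KPI tracks usage two months later. Real data will be noisier. A correlation alone also does not prove that AI caused the change; a pilot/control comparison is stronger evidence.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def lagged_correlations(usage, kpi, max_lag=3, min_points=3):
    """Correlate usage in period t with the KPI in period t + lag, for each lag.

    Assumes usage and kpi are equal-length, chronologically ordered series.
    """
    results = {}
    for lag in range(max_lag + 1):
        x = usage[: len(usage) - lag]
        y = kpi[lag:]
        if len(x) >= min_points:
            results[lag] = round(pearson(x, y), 3)
    return results

# Hypothetical monthly series, constructed so the KPI follows usage by two months
prompts_per_user = [5, 8, 12, 15, 20, 22, 25, 30]
features_shipped = [9, 10, 10, 13, 17, 20, 25, 27]
correlations = lagged_correlations(prompts_per_user, features_shipped)
print(correlations)
```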

6. Iterate and Refine

Treat the measurement process itself as something you can improve. As you gather data, you might discover that some metrics aren’t telling the full story, or that teams are gaming a metric at the expense of something else. Don’t be afraid to refine your KPIs. As your usage matures, for example, you might add a metric such as “percentage of client deliverables that had AI involvement.”
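Once deliverables carry an AI-involvement flag, a metric like this is a simple ratio. The sketch assumes a hypothetical list of (team, used_ai) pairs. How that flag gets recorded, whether as a self-reported tag or as a field in a project tracker, is up to you.

```python
from collections import Counter

def ai_involvement_share(deliverables):
    """Percentage of deliverables per team flagged as having AI involvement.

    deliverables is an iterable of (team, used_ai) pairs.
    """
    totals, with_ai = Counter(), Counter()
    for team, used_ai in deliverables:
        totals[team] += 1
        if used_ai:
            with_ai[team] += 1
    # Counter returns 0 for teams with no AI-assisted deliverables
    return {team: 100 * with_ai[team] / totals[team] for team in totals}

# Hypothetical deliverables log
sample = [
    ("Consulting", True), ("Consulting", False), ("Consulting", True),
    ("Consulting", True), ("Design", False), ("Design", True),
]
shares = ai_involvement_share(sample)
print(shares)
```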

By following these strategies, measuring the impact of ChatGPT Enterprise becomes an ongoing part of your AI adoption journey, rather than a one-time audit. The goal is to create a feedback loop: use insights from the metrics to continually optimize how your organization uses ChatGPT, which in turn will improve those metrics over time.

Rather than building custom dashboards from scratch, you can use Worklytics, an out-of-the-box platform for monitoring how generative AI is being used across your company and what results it’s driving.

So what can Worklytics offer in the context of ChatGPT Enterprise? In a nutshell, Worklytics connects to the software tools your employees use – from messaging apps to code repositories – and extracts insights about collaboration, productivity, and now AI usage.

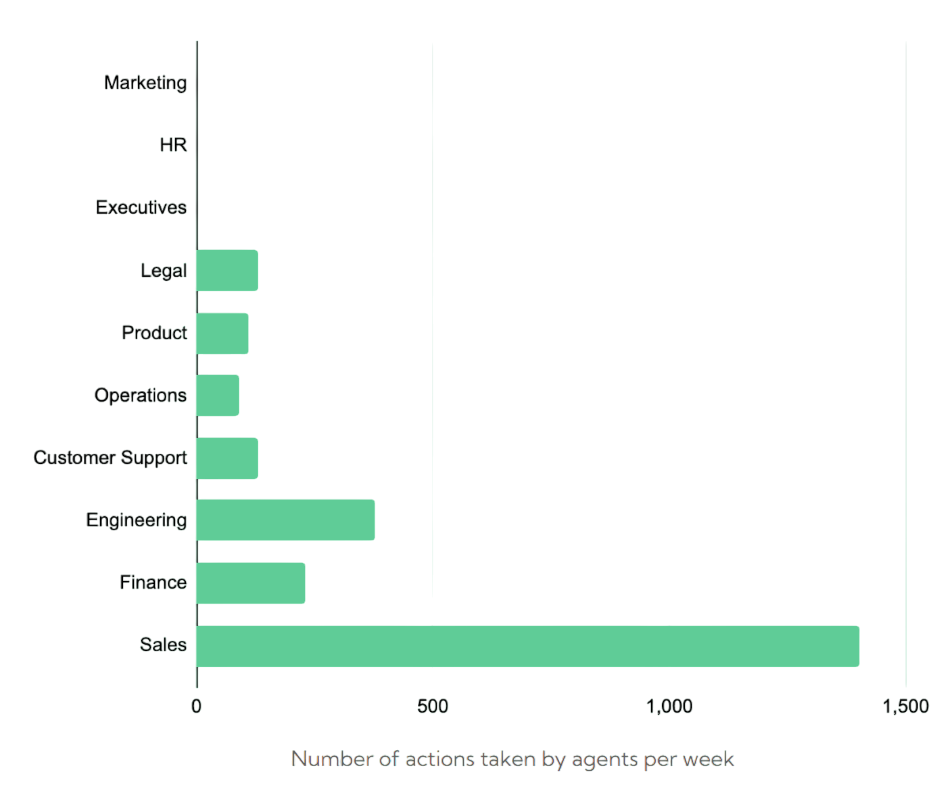

For AI specifically, Worklytics offers an AI adoption analytics dashboard that aggregates data from all your AI tools (such as ChatGPT, Microsoft 365 Copilot, and Slack’s GPT integrations) into a unified view. You can track usage by team and role and see which departments are embracing ChatGPT and how often they use it.

Worklytics also helps demonstrate ROI from AI. By providing metrics such as activation rates and frequency of use, and by correlating usage with productivity indicators, it makes the impact easier to quantify. For example, Worklytics can show that the Engineering department’s output increased in tandem with its high adoption of GitHub Copilot and ChatGPT. That is a strong signal that the productivity gains may be linked to AI.

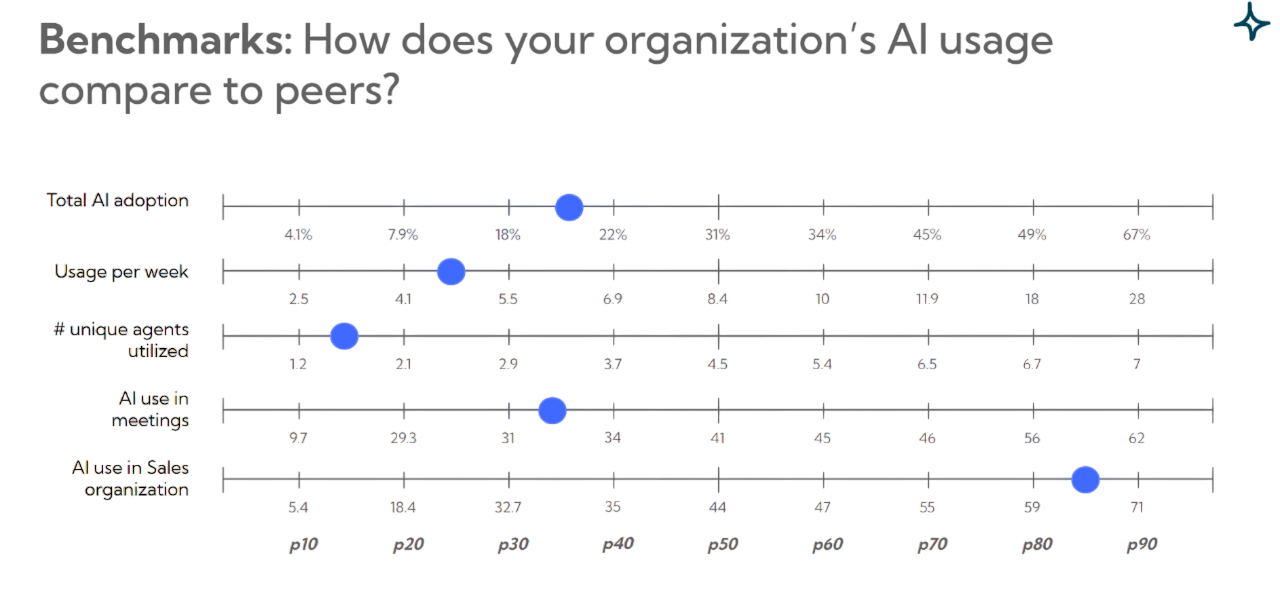

Worklytics can also benchmark your AI adoption against industry peers. This shows whether you’re ahead of the curve or lagging and adds context to your internal metrics.

Another powerful capability is leveraging Organizational Network Analysis (ONA) with AI in mind. Worklytics can map out how AI tools like ChatGPT are woven into the fabric of collaboration. For instance, it might reveal that a certain team extensively uses an AI assistant in project meetings or design reviews, leading to faster knowledge sharing.

Crucially, all of this is done with privacy in focus. Worklytics employs data anonymization and aggregation techniques to ensure you get the insights without exposing any private conversation contents or personal data. For example, it might report that “Team A had a 60% increase in ChatGPT usage month-over-month” without disclosing what individuals asked ChatGPT or any confidential text. This approach aligns perfectly with the need to measure impact responsibly – employees’ trust isn’t compromised, and compliance teams remain happy.

In essence, Worklytics serves as the measurement layer for ChatGPT Enterprise. ChatGPT provides the AI capabilities that transform how work gets done. Worklytics provides the measurement and analytics to ensure those changes are positive and aligned with business goals.

By using a platform like Worklytics, organizations can move beyond gut feel or isolated anecdotes – they gain a data-driven, real-time understanding of their AI adoption. This enables continuous improvement: doubling down on successful use cases, identifying and removing obstacles to adoption, and ultimately achieving the maximum return on their AI investments.

Discover how Worklytics can help you measure and maximize the real impact of ChatGPT Enterprise. Start your insights journey today.